My Multi-Agent System is Slower Than One Model: What Am I Doing Wrong?

I see it every month. A business leader comes to me, excited about their new "autonomous swarm." They have a Planner Agent, a Router, three specialized content agents, and a compliance verification layer. Their goal? Faster output. The reality? Their system takes 45 seconds to generate a single email that a single model could produce in three.

If your multi-agent architecture is slower than a single pass through GPT-4 or Claude, you aren't building a "system"—you are building a bottleneck. Let’s stop pretending that adding more agents automatically equals better results. It usually just equals more API latency and a higher bill.

Before we go any further: What are we measuring weekly? If you can’t answer that, you shouldn't be moving to multi-agent architectures yet. Let’s break this down.

What is a Multi-Agent System (In Plain English)?

Forget the science fiction. A multi-agent system is just a digital assembly line. Instead of one "generalist" trying to do everything, you break the task into discrete roles.

- The Planner Agent: The manager. It decomposes a large request into small, logical steps.

- The Router: The dispatcher. It looks at the step and decides which specific agent has the right tools or instructions to handle it.

- Specialized Agents: The workers. These usually have a narrow system prompt and specific function-calling capabilities.

When you put these in series, you create a "daisy chain." If Agent C waits for Agent B, who waits for Agent A, your total latency is the sum of every step in the chain, not just the slowest one. If your latency is high, it’s usually because you’ve built a serial process out of steps that could have run in parallel.
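To make the cost of a daisy chain concrete, here is a small arithmetic sketch. The per-step latencies are made-up numbers for illustration, not measurements from any real system.

```python
# Hypothetical per-step latencies in seconds; replace with your own measurements.
step_latency = {"planner": 1.2, "router": 0.8, "writer": 2.5, "validator": 1.5}

# Serial chain: each step waits for the previous one, so latencies add up.
serial_total = sum(step_latency.values())

# Fully parallel (only possible when the steps are independent):
# the total is bounded by the slowest single step.
parallel_total = max(step_latency.values())

print(f"serial:   {serial_total:.1f}s")
print(f"parallel: {parallel_total:.1f}s")
```

Real pipelines sit somewhere between the two numbers, because some steps genuinely depend on each other.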

The Architecture Trap: Why You’re Slow

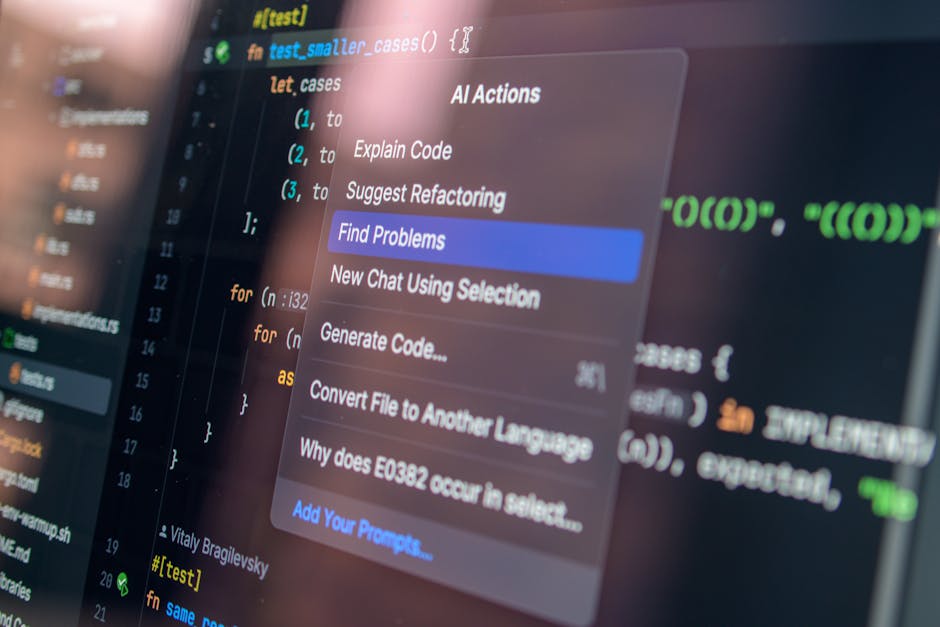

Most beginners build "chatty" architectures. The Planner Agent calls the Router, which calls an agent, which calls a tool, which calls a validator, which feeds back to the Planner. Every handshake is a network call. Every network call is 200ms to 2s of latency.

Here are the common architectural sins I see:

- Sequential Bottlenecks: You are forcing steps that could happen at the same time to wait for each other.

- Over-Routing: Using a heavy "Router" model to decide where a simple task goes. If the Router takes 1.5 seconds to decide that a simple math problem should go to the Calculator Agent, the Router is your primary latency bottleneck.

- Lack of Parallelization: If you are generating a blog post, why are the title, the outline, and the meta-description being written one after another? These should be generated in parallel.
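The blog-post example can be sketched with `asyncio.gather`. The `fake_agent` function below is a hypothetical stand-in that sleeps instead of calling a model, so the only thing this shows is the concurrency pattern.

```python
import asyncio
import time


async def fake_agent(name: str, seconds: float) -> str:
    """Stand-in for a model call; sleeps instead of hitting an API."""
    await asyncio.sleep(seconds)
    return f"{name} done"


async def write_post_parts() -> list[str]:
    # The three parts do not depend on each other, so start them together.
    return await asyncio.gather(
        fake_agent("title", 0.1),
        fake_agent("outline", 0.1),
        fake_agent("meta_description", 0.1),
    )


start = time.perf_counter()
results = asyncio.run(write_post_parts())
elapsed = time.perf_counter() - start
print(results, f"{elapsed:.2f}s")
```

Run one after another, the three calls would take about 0.3 seconds; gathered, they take roughly as long as one call.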

The Hallucination vs. Speed Trade-off

I hear developers say, "We added an extra verification step to stop hallucinations." That’s a noble goal, but let's be honest: Hallucinations are not rare. Pretending they are is how you end up with broken production systems. If you have an agent whose only job is to "check" if the previous agent lied, you’ve just added a massive latency tax.

Instead of endless verification loops, we use Retrieval and Verification:

- Retrieval: Don't make the agent "guess" the facts. Use RAG (Retrieval-Augmented Generation) to ground the output in your internal data *before* the agents start working.

- Verification: Move verification to the function level. Instead of a "Validator Agent," use programmatic checks (e.g., regex, schema validation, API response codes) that run in milliseconds, not seconds.
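As a rough illustration of function-level verification, the sketch below checks a generated email with plain Python instead of a second model call. The rules (placeholder pattern, length limit, status code check) are assumptions chosen for the example; yours will differ.

```python
import re

# Hypothetical rules for a generated email; adjust to your own content policy.
PLACEHOLDER = re.compile(r"\[[A-Z_ ]+\]|\{\{.*?\}\}")  # e.g. [NAME] or {{name}}
MAX_CHARS = 2000


def check_email(body: str, status_code: int) -> list[str]:
    """Return a list of problems; an empty list means the draft passes."""
    problems = []
    if status_code != 200:
        problems.append(f"upstream API returned {status_code}")
    if not body.strip():
        problems.append("empty body")
    if len(body) > MAX_CHARS:
        problems.append("body too long")
    if PLACEHOLDER.search(body):
        problems.append("unresolved placeholder")
    return problems


print(check_email("Hi [NAME], your order shipped.", 200))
print(check_email("Hi Dana, your order shipped.", 200))
```

Checks like these run in well under a millisecond, which is the whole point: they catch the cheap failures before anything expensive gets involved.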

The Performance Measurement Table

If you aren't tracking these, you aren't managing a system—you're playing with a toy. Here is the baseline you need to establish this week.

| Metric | What it tells you |
| --- | --- |
| Time to First Token (TTFT) | How long your Router is taking to "think." |
| End-to-End Latency | Total time from user prompt to final output. |
| Agent Handoff Latency | The idle time spent between two agents. |
| Retry Rate | How often your system hits "too many retries" loops. |
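One lightweight way to start collecting end-to-end and handoff numbers is a timing context manager. This is a minimal sketch: the stage names and the `time.sleep` calls are placeholders for real agent calls, and a production setup would more likely send these values to a tracing or metrics tool.

```python
import time
from contextlib import contextmanager

timings: dict[str, float] = {}


@contextmanager
def timed(stage: str):
    """Record wall-clock seconds for one stage under the given name."""
    start = time.perf_counter()
    try:
        yield
    finally:
        timings[stage] = time.perf_counter() - start


with timed("end_to_end"):
    with timed("router"):
        time.sleep(0.02)  # stand-in for the routing call
    with timed("writer"):
        time.sleep(0.03)  # stand-in for the writing agent

# Time not accounted for by any agent is handoff overhead.
handoff = timings["end_to_end"] - timings["router"] - timings["writer"]
print({k: round(v, 3) for k, v in timings.items()}, round(handoff, 4))
```

In this toy run the handoff overhead is close to zero; in a real system it includes serialization, queueing, and network hops between agents.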

How to Fix Your Latency Bottlenecks

If your system is dragging, don't just throw more hardware at it. Follow this checklist to trim the fat:

1. Audit the Router

Is your Router a GPT-4 class model? Stop it. Switch to a smaller, faster model (like GPT-4o-mini or Claude Haiku) for routing tasks. A router doesn't need to be smart; it needs to be fast and accurate. If it’s struggling, simplify your routing categories.

2. Implement Parallelization

Audit your Planner Agent's output. Does Step 3 depend on the output of Step 2? If not, trigger them simultaneously. In modern frameworks, you can fire off three agents at once and aggregate their results. How much you save depends on how much of the chain is truly independent: three independent steps of similar length finish in roughly the time of one.
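The dependency audit can be done mechanically. The sketch below uses the standard library's `graphlib.TopologicalSorter` (Python 3.9+) to group a plan into waves of steps that can run at the same time. The plan itself is a hypothetical example, not the output of any particular planner.

```python
from graphlib import TopologicalSorter

# Hypothetical plan: each step maps to the set of steps it depends on.
plan = {
    "research": set(),
    "title": {"research"},
    "outline": {"research"},
    "meta_description": {"research"},
    "draft": {"title", "outline"},
}


def parallel_waves(deps: dict[str, set[str]]) -> list[list[str]]:
    """Group steps into waves; everything in one wave can run concurrently."""
    sorter = TopologicalSorter(deps)
    sorter.prepare()
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # steps whose dependencies are done
        waves.append(ready)
        sorter.done(*ready)
    return waves


print(parallel_waves(plan))
```

Five steps collapse into three waves here, so the critical path is three calls long instead of five.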

3. Kill the "Too Many Retries" Loop

If your agents are constantly failing and retrying, the prompt engineering is bad, or the system instructions are contradictory. Every retry costs another full model call, which is often several seconds. Instead of letting agents "fix themselves," build a circuit breaker: if an agent fails twice, use a hardcoded default response or escalate to a human.

4. Prefer Schema over Prose

Stop asking agents to "verify if the output looks correct." Force them to output JSON. Use strict schema enforcement (like Pydantic or Instructor). This removes the need for a secondary "Checker Agent" for structural errors, because the system rejects malformed responses immediately. Factual accuracy still has to come from retrieval, not from the schema.
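To show the idea without extra dependencies, here is a standard-library stand-in for schema enforcement. It is not Pydantic's or Instructor's API, and the `EmailDraft` fields are hypothetical; those libraries give you the same reject-on-mismatch behavior with far less hand-written checking.

```python
import json
from dataclasses import dataclass


@dataclass
class EmailDraft:
    subject: str
    body: str
    priority: int


def parse_draft(raw: str) -> EmailDraft:
    """Parse model output; raise ValueError if it does not match the schema."""
    data = json.loads(raw)  # json.JSONDecodeError is a subclass of ValueError
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    expected = {"subject": str, "body": str, "priority": int}
    if set(data) != set(expected):
        raise ValueError(f"unexpected fields: {sorted(data)}")
    for field, kind in expected.items():
        if not isinstance(data[field], kind):
            raise ValueError(f"{field} must be {kind.__name__}")
    return EmailDraft(**data)


good = parse_draft('{"subject": "Hello", "body": "Your order shipped.", "priority": 2}')
print(good)
```

Anything that fails to parse raises `ValueError` right away, so a malformed response can be retried or routed to the fallback without a model ever being asked to judge it.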

Final Thoughts: Don't Skip the Evals

I see teams skipping evals because they think they’re "too busy." That’s how you get production outages. Before you deploy a change to your agent graph, run a test case suite. If the new "optimized" version is 10% faster but 5% less accurate, you haven't made a net improvement.
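A release gate for that trade-off can be a few lines. In this sketch the `EvalRun` fields, the numbers, and the `MAX_ACCURACY_DROP` threshold are all assumptions; how much accuracy you are willing to trade for speed is a decision for your team, not something the code can tell you.

```python
from dataclasses import dataclass


@dataclass
class EvalRun:
    accuracy: float       # fraction of test cases passed, 0.0 to 1.0
    p50_latency_s: float  # median end-to-end latency in seconds


# Hypothetical gate: how much accuracy you are willing to trade is your call.
MAX_ACCURACY_DROP = 0.01


def should_ship(baseline: EvalRun, candidate: EvalRun) -> bool:
    """Ship only if the candidate is faster without a meaningful accuracy loss."""
    accuracy_drop = baseline.accuracy - candidate.accuracy
    faster = candidate.p50_latency_s < baseline.p50_latency_s
    return faster and accuracy_drop <= MAX_ACCURACY_DROP


baseline = EvalRun(accuracy=0.92, p50_latency_s=10.0)
candidate = EvalRun(accuracy=0.87, p50_latency_s=9.0)  # 10% faster, 5 points less accurate
print(should_ship(baseline, candidate))
```

Wiring a check like this into your deploy step means the "optimized but worse" version gets stopped by the test suite instead of by your customers.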

Stop chasing the "Autonomous AGI" buzz. Build a deterministic, observable system. Measure your latency, kill the unnecessary handoffs, and stop using a sledgehammer to crack a nut. What are you going to measure tomorrow morning? Start there.